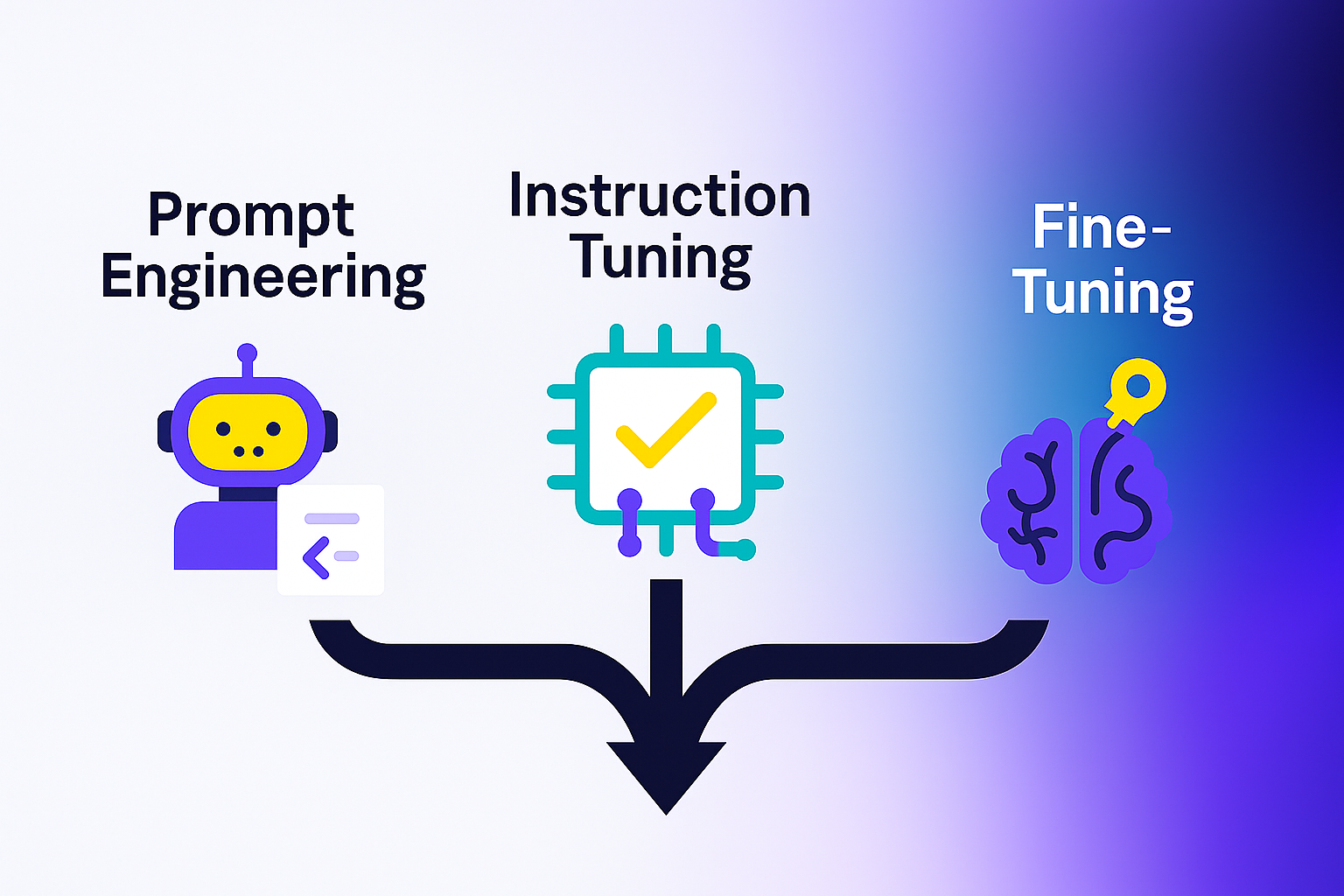

Fine-Tuning | Prompt Eng. | Instruction Tuning

Confused by all the ways to personalize LLMs? We break down fine-tuning, prompt engineering, and instruction tuning so you know what to use, when, and why.

Understanding the Customization Spectrum

As large language models (LLMs) mature, customizing them has gone from "nice to have" to business-critical. But with so many options—prompt engineering, instruction tuning, fine-tuning, LoRA, QLoRA, adapters—the decision tree can quickly become overwhelming.

Let’s demystify the landscape.

What Are They, Exactly?

| Method | Definition |
|---|---|
| Prompt Engineering | Designing precise, structured inputs to steer LLM output behavior |
| Instruction Tuning | Training models on a variety of instructions to generalize across tasks |
| Fine-Tuning | Re-training parts (or all) of the base model on custom labeled data |
| LoRA / QLoRA | Lightweight fine-tuning via low-rank adapters; cheap and memory-efficient |
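To see why LoRA is cheap, count trainable parameters for a single d × k weight matrix: full fine-tuning updates all d·k entries, while LoRA trains two small factors, B (d × r) and A (r × k), for r(d + k) parameters in total. A minimal sketch; the 4096 × 4096 layer and rank 8 are hypothetical values chosen for illustration.

```python
def full_update_params(d: int, k: int) -> int:
    """Trainable parameters when fully fine-tuning one d x k weight matrix."""
    return d * k


def lora_update_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for a rank-r LoRA update B @ A,
    where B is d x r and A is r x k (the original matrix stays frozen)."""
    return r * (d + k)


if __name__ == "__main__":
    d = k = 4096  # hypothetical layer size, for illustration only
    r = 8         # hypothetical LoRA rank
    full = full_update_params(d, k)
    lora = lora_update_params(d, k, r)
    print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

With these values, LoRA trains 65,536 parameters instead of 16,777,216, roughly 0.39% of the matrix, which is where its low memory and compute cost comes from.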

Key Tradeoffs to Consider

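| Factor | Prompting | Instruction Tuning | Full Fine-Tuning | LoRA / QLoRA |
|---|---|---|---|---|
| Training cost | None | High | Very High | Low |
| Latency | Low | Medium | Medium | Low |
| Data needed | None | 1K–10K examples | 50K+ | 1K–5K |
| Custom behavior | Shallow | Generalized | Deep | Targeted |
| Dev experience | Easiest | Harder | Complex | Moderate |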

What Should You Use—and When?

Use Prompt Engineering when:

- You need speed and don’t control the model

- Use cases are simple or exploratory

- Examples: marketing copy generation, a simple chatbot, internal search
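A structured prompt usually separates a fixed instruction from a few worked examples and the actual request. The sketch below assembles one as a list of role/content messages, a shape many chat-completion APIs accept; it calls no real API, and the tagline task is a hypothetical example. You would pass the resulting list to whatever client your provider offers.

```python
from typing import Dict, List, Tuple

Message = Dict[str, str]


def build_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    query: str,
) -> List[Message]:
    """Assemble a structured chat prompt: a system instruction,
    few-shot input/output pairs, then the real query."""
    messages: List[Message] = [{"role": "system", "content": instruction}]
    for user_text, assistant_text in examples:
        # Each worked example becomes a user turn followed by the ideal reply.
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    messages.append({"role": "user", "content": query})
    return messages


if __name__ == "__main__":
    prompt = build_prompt(
        instruction="You write one-sentence product taglines. Reply with the tagline only.",
        examples=[("Noise-cancelling headphones", "Hear only what matters.")],
        query="Insulated steel water bottle",
    )
    for m in prompt:
        print(f"{m['role']}: {m['content']}")
```

Because the model never changes, all of the steering lives in this structure; tightening the instruction or swapping examples is the whole iteration loop.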

Use Instruction Tuning when:

- You want a flexible generalist

- You're building a developer platform or agentic tool

- Example: a model that can summarize, translate, and classify with equal ease
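Instruction tuning trains on many (instruction, optional input, response) records drawn from different tasks, all rendered into one consistent prompt format. The sketch below formats such records; the `### Instruction` / `### Response` template is a hypothetical choice modeled on common open instruction datasets, and the three records are illustrative.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class InstructionExample:
    instruction: str
    input: str  # may be empty when the instruction needs no extra context
    output: str


# Hypothetical prompt template; use whatever format your base model expects.
TEMPLATE_WITH_INPUT = (
    "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:\n"
)
TEMPLATE_NO_INPUT = "### Instruction:\n{instruction}\n\n### Response:\n"


def to_training_pair(ex: InstructionExample) -> Tuple[str, str]:
    """Render one record as (prompt, target). Training code often masks the
    prompt so the loss is computed only on the target tokens."""
    template = TEMPLATE_WITH_INPUT if ex.input else TEMPLATE_NO_INPUT
    # str.format ignores unused keyword arguments, so passing input is safe here.
    prompt = template.format(instruction=ex.instruction, input=ex.input)
    return prompt, ex.output


if __name__ == "__main__":
    dataset: List[InstructionExample] = [
        InstructionExample(
            "Summarize in one sentence.",
            "LoRA adds small trainable matrices to a frozen model.",
            "LoRA fine-tunes a model cheaply by training small add-on matrices.",
        ),
        InstructionExample("Translate to French.", "Good morning", "Bonjour"),
        InstructionExample(
            "Classify the sentiment as positive or negative.",
            "I love this tool!",
            "positive",
        ),
    ]
    for ex in dataset:
        prompt, target = to_training_pair(ex)
        print(prompt + target)
        print("-" * 20)
```

Mixing summarization, translation, and classification records in one training set is what pushes the model toward following instructions in general rather than mastering a single task.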

Use Fine-Tuning / LoRA when:

- You need deep domain alignment or structured output

- You have control of infrastructure or use open-source models

- Examples: legal document summarizer, customer support LLM, medical QA bot
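The core of LoRA fits in a few lines: the pretrained weight W stays frozen, and training learns a low-rank update scaled by alpha/r, so the layer computes h = Wx + (alpha/r)·B(Ax). In the LoRA paper, B starts at zero so training begins from the unchanged base model. The sketch uses plain Python lists and toy matrices (illustrative values, not from any real model); in practice a library such as Hugging Face PEFT wires this into the network's layers.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, x: Vector) -> Vector:
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: Vector, alpha: float, r: int) -> Vector:
    """h = W x + (alpha / r) * B (A x). W (d x k) stays frozen;
    only A (r x k) and B (d x r) are trained."""
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * dv for b, dv in zip(base, delta)]


def merge(W: Matrix, A: Matrix, B: Matrix, alpha: float, r: int) -> Matrix:
    """Fold the trained adapter into W so inference needs a single matmul again."""
    scale = alpha / r
    BA = matmul(B, A)
    return [[w + scale * u for w, u in zip(wr, ur)] for wr, ur in zip(W, BA)]


if __name__ == "__main__":
    W = [[1.0, 0.0, 2.0], [0.0, 1.0, -1.0]]  # frozen base weights, d=2, k=3
    A = [[0.5, -0.5, 1.0]]                   # trained factor, r=1, k=3
    B = [[2.0], [1.0]]                       # trained factor, d=2, r=1
    x = [1.0, 2.0, 3.0]
    h = lora_forward(W, A, B, x, alpha=2.0, r=1)
    h_merged = matvec(merge(W, A, B, alpha=2.0, r=1), x)
    print(h, h_merged)  # both are [17.0, 4.0]
```

The adapter path and the merged weight give identical outputs, which is why a merged LoRA model adds no inference latency; keeping adapters unmerged instead lets one base model serve several domain-specific variants.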

“Don’t reach for a hammer when a prompt will do the job.”

— NovaLuna Labs, Applied AI Team

What’s Emerging?

- Mixtral Adapters: A modular, parameter-efficient tuning method used in mixture-of-experts models.

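- QLoRA: Memory-efficient fine-tuning that trains LoRA adapters on top of a base model quantized to 4 bits, reported to match 16-bit fine-tuning quality while making it practical to fine-tune large models on a single GPU.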

- Prompt Compression: Techniques that automatically shorten prompts (for example, by dropping low-information tokens), cutting cost and latency.
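QLoRA's memory savings come from storing the frozen base weights in 4 bits with one scale per block of weights, dequantizing them on the fly while gradients flow only into the LoRA adapters. The sketch below uses plain symmetric integer levels as a simplified stand-in for QLoRA's NF4 data type; the block sizes, sample weights, and 7B-parameter figure are illustrative assumptions.

```python
from typing import List, Tuple


def quantize_block(block: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Symmetric absmax quantization of one block to signed integers.
    With 4 bits this uses the levels -7..7."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    absmax = max(abs(v) for v in block) or 1.0  # guard against an all-zero block
    scale = absmax / qmax
    return [round(v / scale) for v in block], scale


def dequantize_block(q: List[int], scale: float) -> List[float]:
    return [v * scale for v in q]


def quantize(weights: List[float], block_size: int = 64, bits: int = 4) -> List[Tuple[List[int], float]]:
    """Quantize in fixed-size blocks with one scale per block, so a single
    outlier only degrades precision inside its own block."""
    return [
        quantize_block(weights[i:i + block_size], bits)
        for i in range(0, len(weights), block_size)
    ]


def weight_memory_gb(n_params: float, bits: int) -> float:
    """Memory for the weights alone, in gigabytes (1e9 bytes)."""
    return n_params * bits / 8 / 1e9


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.0, 0.48, -0.76]
    blocks = quantize(weights, block_size=4)
    restored = [w for q, s in blocks for w in dequantize_block(q, s)]
    err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"max abs error: {err:.4f}")
    # Weights only: ignores per-block scales, adapters, activations, optimizer state.
    print(f"7B params: {weight_memory_gb(7e9, 16):.1f} GB at 16-bit, "
          f"{weight_memory_gb(7e9, 4):.1f} GB at 4-bit")
```

Going from 16 to 4 bits cuts weight memory by 4× (14 GB to 3.5 GB for 7B parameters), at the cost of a rounding error of at most half a block's scale per weight.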

Final Thoughts

There’s no one-size-fits-all. Your LLM customization method should align with:

- The complexity of your task

- Your budget and infrastructure

- Your team’s technical capability

At NovaLuna, we often recommend:

- Prompt engineering → for prototypes

- LoRA → for early production

- Full fine-tuning → for scale-critical, regulated use cases

Make your customization strategy deliberate—not expensive.
